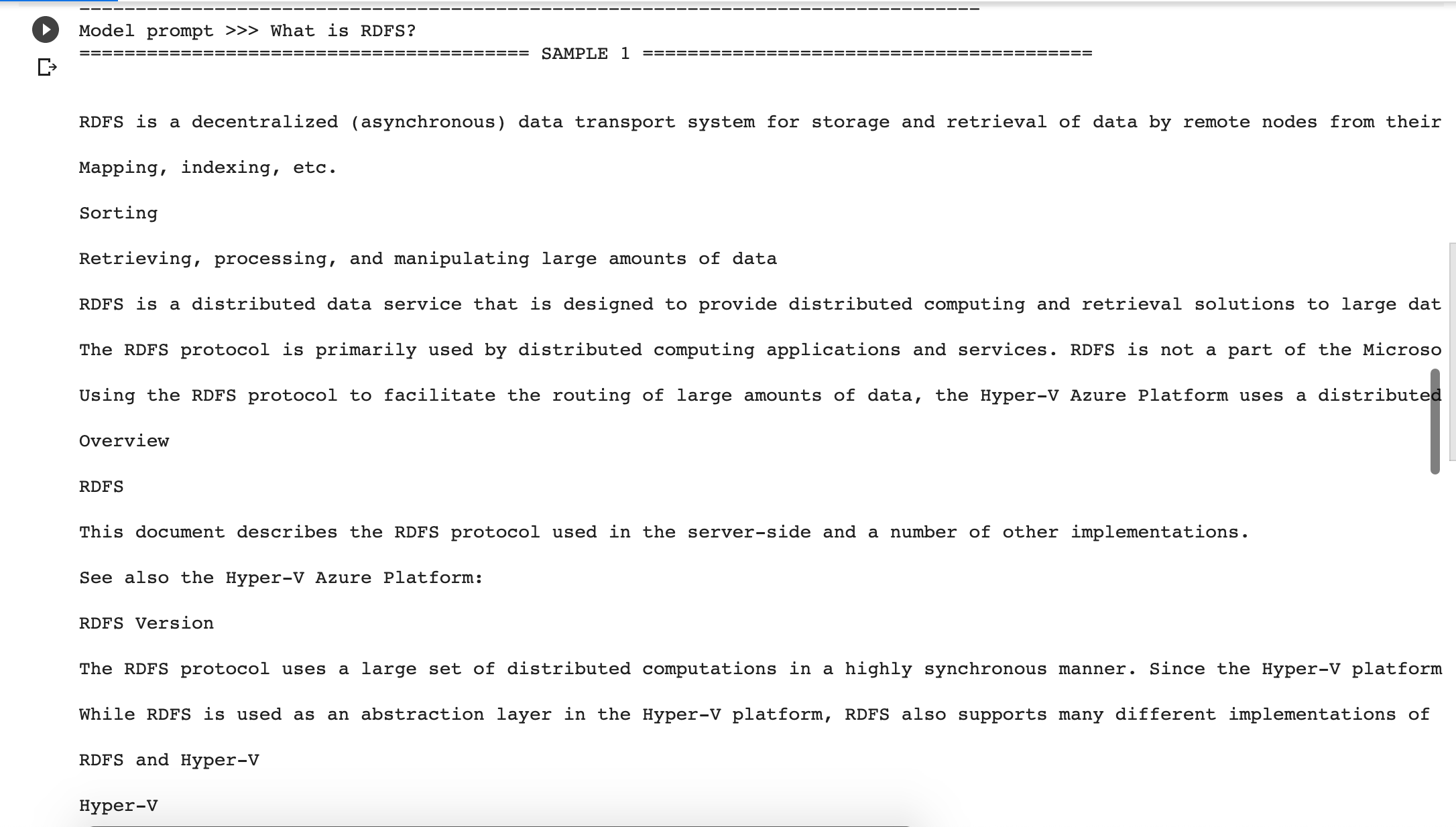

The one for the convolutional layer might be the ‘heaviest’, because we have to implement the case of splitting and not splitting in one function. Now that we have the basic class structure, lets define some helper functions for creating the layers. The load_initial_weights function will be used to assign the pretrained weights to our created variables. We could do all in once, but I personally find this a much cleaner way. In the _init_ function we will parse the input arguments to class variables and call the create function. create () def create ( self ): pass def load_initial_weights ( self ): pass WEIGHTS_PATH = weights_path # Call the create function to build the computational graph of AlexNet WEIGHTS_PATH = 'bvlc_alexnet.npy' else : self. IS_TRAINING = is_training if weights_path = 'DEFAULT' : self.

(if bvlc_alexnet.npy is not in the same folder) weights_path: path string, path to the pretrained weights,

skip_layer: list of strings, names of the layers you want to reinitialize num_classes: int, number of classes of the new dataset keep_prob: tf.placeholder, for the dropout rate x: tf.placeholder, for the input images Let’s first look onto the model structure as shown in the original paper:Ĭlass AlexNet ( object ): def _init_ ( self, x, keep_prob, num_classes, skip_layer, weights_path = 'DEFAULT' ): """ But don’t worry, we don’t have to do everything manually. This is the same thing I defined for BatchNormalization in my last blog post but for the entire model. To start finetune AlexNet, we first have to create the so-called “Graph of the Model”. Anyway, here you can download the already converted weights. I tried it on my own and it works pretty straight forward. Luckily Caffe to TensorFlow exists, a small conversion tool, to translate any *prototxt model definition from caffe to python code and a TensorFlow model, as well as conversion of the weights. Caffe does, but it’s not to trivial to convert the weights manually in a structure usable by TensorFlow. Unlike VGG or Inception, TensorFlow doesn’t ship with a pretrained AlexNet. I’ll explain most of the steps you need to do, but basic knowledge of TensorFlow and machine/deep learning is required to fully understand everything. Although I recommend reading the first part, click here to skip the first part and go directly on how to finetune AlexNet.Īnd next: This is not an introduction neither to TensorFlow nor to finetuning or convolutional networks in general. Basically it is divided into two parts: In the first part I created a class to define the model graph of AlexNet together with a function to load the pretrained weights and in the second part how to actually use this class to finetune AlexNet on a new dataset.
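
The "splitting" case is a grouped convolution: the input channels and the filters are divided into `groups` parts, each part is convolved on its own, and the outputs are concatenated along the channel axis. The following is a minimal pure-Python sketch of that idea (stride 1, no padding, channels-last nested lists), not the TensorFlow helper used in this post; the names `conv2d` and `grouped_conv2d` are hypothetical.

```python
from typing import List

# Hypothetical layouts for illustration:
# image is [H][W][C], kernel is [KH][KW][C_in][C_out]
Image = List[List[List[float]]]
Kernel = List[List[List[List[float]]]]


def conv2d(x: Image, k: Kernel) -> Image:
    """Plain 2D cross-correlation (as used in CNNs), stride 1, 'VALID' padding."""
    kh, kw = len(k), len(k[0])
    c_in, c_out = len(k[0][0]), len(k[0][0][0])
    assert len(x[0][0]) == c_in, "kernel input depth must match image channels"
    out_h, out_w = len(x) - kh + 1, len(x[0]) - kw + 1
    out = [[[0.0] * c_out for _ in range(out_w)] for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            for di in range(kh):
                for dj in range(kw):
                    for c in range(c_in):
                        v = x[i + di][j + dj][c]
                        for o in range(c_out):
                            out[i][j][o] += v * k[di][dj][c][o]
    return out


def grouped_conv2d(x: Image, k: Kernel, groups: int = 1) -> Image:
    """Split input channels and filters into `groups`, convolve each pair, concatenate.

    The kernel's input depth must be C / groups, as in AlexNet's split layers.
    """
    if groups == 1:
        # The "not splitting" case: an ordinary convolution
        return conv2d(x, k)
    c, c_out = len(x[0][0]), len(k[0][0][0])
    assert c % groups == 0 and c_out % groups == 0
    cg, og = c // groups, c_out // groups
    results = []
    for g in range(groups):
        # Input channels [g*cg, (g+1)*cg) ...
        x_g = [[px[g * cg:(g + 1) * cg] for px in row] for row in x]
        # ... are convolved only with output filters [g*og, (g+1)*og)
        k_g = [[[taps[g * og:(g + 1) * og] for taps in col] for col in row]
               for row in k]
        results.append(conv2d(x_g, k_g))
    # Concatenate the per-group outputs along the channel axis
    out_h, out_w = len(results[0]), len(results[0][0])
    return [[sum((r[i][j] for r in results), []) for j in range(out_w)]
            for i in range(out_h)]
```

With `groups=1` the function reduces to an ordinary convolution. A grouped convolution gives the same result as a single convolution whose kernel is block-diagonal across the groups, while storing `groups` times fewer weights.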

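The `skip_layer` argument decides which layers `load_initial_weights` leaves alone, so that they keep their random initialization and are trained from scratch (for example the last fully connected layer, whose size depends on `num_classes`). The sketch below only shows that selection logic in plain Python. It assumes the converted weights file holds a dict mapping layer names to `[weights, biases]`, with plain lists standing in for arrays; the function name `plan_weight_loading` is hypothetical. In a TensorFlow 1.x graph, each resulting triple could then be applied with something like `tf.get_variable(var_name).assign(value)` inside `tf.variable_scope(layer_name, reuse=True)`, which is omitted here.

```python
from typing import Dict, List, Sequence, Tuple

# Assumption: layer name -> [weights, biases]; lists stand in for numpy arrays
PretrainedDict = Dict[str, Sequence]


def plan_weight_loading(pretrained: PretrainedDict,
                        skip_layer: List[str]) -> List[Tuple[str, str, object]]:
    """Return (layer, variable_name, value) triples to assign, skipping reinitialized layers."""
    assignments = []
    for layer_name in sorted(pretrained):
        if layer_name in skip_layer:
            # Keep the fresh random initialization; this layer is trained from scratch
            continue
        weights, biases = pretrained[layer_name]
        assignments.append((layer_name, 'weights', weights))
        assignments.append((layer_name, 'biases', biases))
    return assignments


pretrained = {'fc8': [[0.5, 0.5], [0.0, 0.0]],
              'conv1': [[0.1, 0.2], [0.0]],
              'fc7': [[0.3], [0.1]]}
plan = plan_weight_loading(pretrained, skip_layer=['fc8'])
print([(layer, var) for layer, var, _ in plan])
```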